A clear and concise reference sheet on the EU AI Act is valuable for trade union representatives. This brief arms union reps with the tools to expose and curb unfair AI at work.

The EU AI Act (Regulation (EU) 2024/1689) establishes the world’s first comprehensive framework for governing artificial intelligence, including stringent protections for workers subjected to algorithmic management. A concise reference sheet equips trade union representatives with the knowledge needed to anticipate and challenge the impact of algorithmic management on workers. Because AI systems increasingly influence hiring, evaluation, and daily management practices, accessible guidance helps representatives safeguard fairness, transparency, and dignity at work.

Why GDPR matters

While the EU AI Act serves as the primary instrument regulating algorithmic management, the General Data Protection Regulation (GDPR) remains a foundational layer of worker protection, especially where decisions are automated. Article 22 of the GDPR grants individuals the right not to be subject to a decision based solely on automated processing, including profiling, that produces legal effects or similarly significantly affects them. Hiring, promotion, or dismissal decisions driven by AI tools can fall within that scope. Together, these frameworks form a complementary protective regime: the AI Act governs the design and use of high-risk employment AI systems, whereas the GDPR ensures workers’ procedural rights, transparency, and recourse when automated decisions affect them.

Timeline of Enforcement of the EU AI Act

The EU AI Act entered into force on 1 August 2024, but its obligations become applicable in stages. Understanding this timeline is essential for anticipating employer obligations and ensuring timely protection for workers.

February 2025 — Prohibitions and AI Literacy Requirements Apply

The bans on prohibited AI practices, such as emotion recognition in workplaces and manipulative AI, become legally binding. AI literacy requirements also start to apply.

August 2025 — General-Purpose AI Model Obligations Apply

Requirements for general-purpose AI models, including documentation and risk controls, start to apply.

August 2026 — Most High-Risk Obligations Apply, including Employment AI

This milestone is critical for workers. Obligations for the high-risk systems listed in Annex III, including recruitment tools, performance evaluation algorithms, task allocation systems, and dismissal-supporting AI, become enforceable. This includes compliance with Articles 9 to 15, covering risk management, data governance, technical documentation, logging, transparency, human oversight, accuracy, and robustness.

August 2027 — Remaining Obligations Apply

The remaining provisions of the Act become applicable, completing the regulatory framework.
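
For representatives who track compliance dates in a spreadsheet or script, the staged timeline can be expressed as a simple lookup. The sketch below is illustrative only: the function names are hypothetical, and the day of the month (the 2nd) is an assumption taken from Article 113 of the Act rather than from the month-level dates given in this brief.

```python
from datetime import date

# Milestones from the timeline in this brief.
# Assumption: each stage applies from the 2nd of the month (Article 113).
MILESTONES = [
    (date(2025, 2, 2), "Prohibitions and AI literacy requirements"),
    (date(2025, 8, 2), "General-purpose AI model obligations"),
    (date(2026, 8, 2), "Most high-risk obligations, including employment AI"),
    (date(2027, 8, 2), "Remaining obligations"),
]


def obligations_applicable(on: date) -> list[str]:
    """Return the milestone labels that already apply on the given date."""
    return [label for start, label in MILESTONES if on >= start]


def employment_ai_enforceable(on: date) -> bool:
    """True once the high-risk employment obligations have started to apply."""
    return on >= date(2026, 8, 2)


if __name__ == "__main__":
    for label in obligations_applicable(date.today()):
        print("Applies today:", label)
```

A check such as `employment_ai_enforceable(date(2026, 3, 1))` returns `False`, which is a reminder that until August 2026 challenges to employment AI have to rest on other instruments.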

From August 2026 onward, trade union representatives should be ready to demand specific documentation from employers, such as risk assessment reports, data mapping inventories, and records of human oversight procedures. Identifying and requesting these concrete documents can serve as strategic bargaining triggers, helping unions prepare evidence-based dossiers to hold employers accountable and negotiate stronger protections.

Risk Management (Article 9)

To turn the Article 9 risk management requirement into practical action, union reps can use the risk assessment process as an opportunity for dialogue. During a review, representatives might ask:

- Which protected characteristics were tested for bias?

- How were risks of unfair treatment in hiring and evaluation identified?

- What steps were taken to ensure data quality and relevance?

- Who participated in the assessment, and were workers consulted?

- Are there clear procedures if a worker wants to challenge an AI-driven decision?

Questions like these make risk management a concrete and constructive topic of engagement with employers.

Logging and Auditability (Article 12)

Logs can be decisive evidence. In one case, log data provided a clear timeline showing that an automated dismissal decision was triggered by a data input error, allowing a worker to challenge and ultimately reverse the firing (Spanish court annuls firing over AI-generated dismissal letter, 2024). Concrete examples like this show why early access to logs under Article 12 is essential and can motivate representatives to request this evidence as part of routine oversight.
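
Where a union-appointed expert does obtain an export of decision logs, a first pass over them can be very simple. The sketch below is a minimal illustration under stated assumptions: the `LogEntry` fields and the event names (`input_validation_failed`, `decision_issued`) are hypothetical, since the Act does not prescribe a log format and real systems will differ.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class LogEntry:
    # Hypothetical log schema; a real export will use the vendor's own fields.
    timestamp: str                        # ISO 8601, e.g. "2024-03-01T09:05:00"
    event: str                            # e.g. "decision_issued"
    detail: str = ""
    human_reviewer: Optional[str] = None  # ID of the reviewer, if one is recorded


def flag_decisions(entries: list[LogEntry]) -> list[tuple[str, list[str]]]:
    """Flag decisions that follow an input error or record no human reviewer."""
    flags = []
    input_error_seen = False
    # ISO 8601 timestamps in one format sort chronologically as plain strings.
    for entry in sorted(entries, key=lambda e: e.timestamp):
        if entry.event == "input_validation_failed":
            input_error_seen = True
        elif entry.event == "decision_issued":
            reasons = []
            if input_error_seen:
                reasons.append("preceded by input validation error")
            if entry.human_reviewer is None:
                reasons.append("no human reviewer recorded")
            if reasons:
                flags.append((entry.timestamp, reasons))
            input_error_seen = False  # reset for the next decision
    return flags


sample_log = [
    LogEntry("2024-03-01T09:00:00", "input_validation_failed", "missing shift data"),
    LogEntry("2024-03-01T09:05:00", "decision_issued", "dismissal"),
    LogEntry("2024-03-02T10:00:00", "decision_issued", "warning", human_reviewer="hr-17"),
]
flagged = flag_decisions(sample_log)
```

The output is a list of timestamps with the reasons each decision deserves a closer look. It is a starting point for questions to the employer, not proof of a violation.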

Key Protections for Workers

Definition of the High-Risk Employment Category

The EU AI Act classifies AI systems used for recruitment, candidate screening, performance monitoring, or decisions affecting employment terms as high-risk. Employers therefore cannot deploy such systems unless strict legal obligations, designed to protect fundamental rights and prevent discriminatory or opaque decision-making, are met. The legal basis is Article 6(2) read with Annex III, point 4, which covers systems used in hiring, evaluation, task allocation, promotion, or termination and triggers the Act’s strongest safeguards.

Core Safeguards (Articles 9–15)

- Risk Management (Article 9)

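Risks must be assessed, documented, and mitigated throughout the AI system lifecycle to reduce harms such as bias, discriminatory scoring, and unjustified termination. The duty falls primarily on the system’s provider; employers, as deployers, must use the system in line with the provider’s instructions.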

- Data Governance and Bias Prevention (Article 10)

The Act requires employment AI to be built on training, validation, and testing data that are relevant, sufficiently representative, and examined for possible biases, thereby mitigating risks of discriminatory hiring or evaluation outcomes.

- Technical Documentation (Article 11)

Comprehensive system documentation supports accountability, facilitates worker consultations, and enables investigations into unfair algorithmic decisions.

- Logging and Auditability (Article 12)

High-risk AI systems must automatically record events in logs, creating the evidence trail that workers and regulators need to audit decisions, contest outcomes, and identify systemic issues.

- Transparency (Article 13)

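Providers must supply clear information on how the system functions, the data it uses, and its limitations, and employers must inform workers and their representatives before using a high-risk system at the workplace (Article 26(7)). This helps address hidden decision rules that would otherwise keep workers in the dark about how AI affects them.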

- Human Oversight (Article 14)

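High-risk systems must be designed so that people can effectively oversee them, and employers must assign that oversight to staff with the competence and authority to intervene. Firing, rating, or disciplinary actions should therefore never rest on an unchecked algorithmic output, safeguarding worker dignity and due process.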

- Accuracy, Robustness, and Security (Article 15)

High-risk AI must be accurate and resilient, reducing erroneous evaluations, misclassifications, and other harmful outcomes for workers.

Prohibited Practices Protecting Workers

The Act prohibits certain practices deemed to pose unacceptable risks to fundamental rights, safety, and public interests. These include:

- AI systems using subliminal techniques to manipulate behaviour.

- Exploitation of vulnerabilities of specific groups, including children and individuals with disabilities.

- Social scoring based on personal characteristics that results in discriminatory outcomes.

- Prediction of criminal behaviour based solely on profiling (Welcome to Matrix).

- Untargeted scraping for facial recognition databases.

- Emotion recognition in workplaces and educational institutions, except for medical or safety reasons (Welcome to Blade Runner).

- Biometric categorisation to infer sensitive attributes, except for lawful law enforcement purposes.

- Real-time remote biometric identification in public spaces for law enforcement, subject to narrow exceptions (Welcome to Robocop).

What Can Trade Union Reps Do?

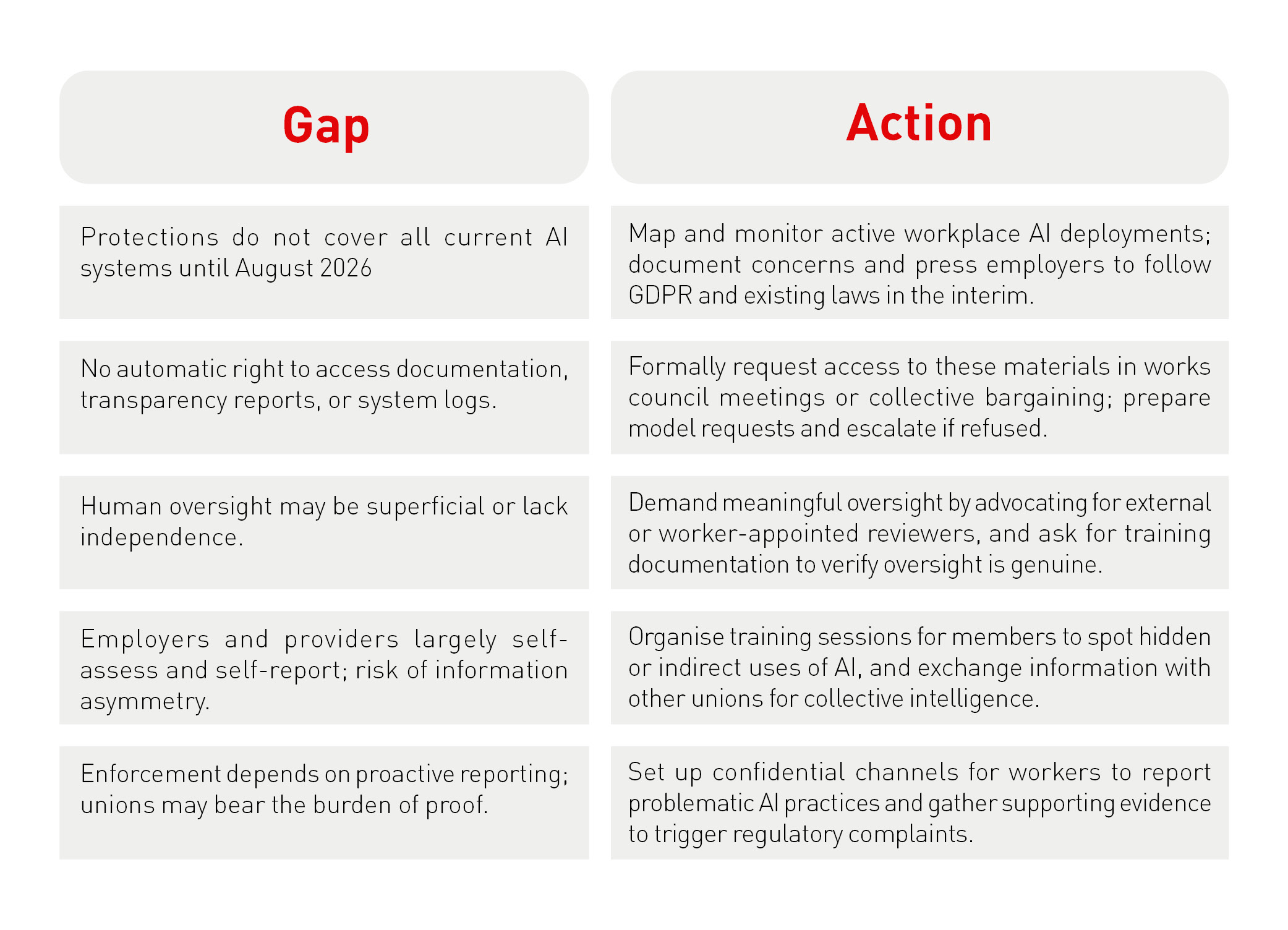

Despite the promise of robust protections in the EU AI Act, significant gaps remain. The Act offers unions new leverage to address algorithmic management, but its safeguards are not automatic or all-encompassing, and many protections take effect only in the future. This tension between promise and reality sets the stage: unions gain powerful legal tools, but the burden remains on representatives to secure real workplace change. Effective enforcement will depend on how unions use these provisions and how employers comply. To help union members feel both warned and empowered, it is important to pair each limitation of the Act with a practical, immediate organising step.

The table below outlines key gaps and corresponding actions representatives can take right now:

Inspired by The Why Not Lab, founded by Dr Christina Colclough, advisor to UNI Global Union on digitalisation and algorithmic management. https://www.thewhynotlab.com/services/toolkit

This approach enables trade union representatives to respond proactively to the Act’s limitations and turn legislative shortfalls into immediate areas for organising.

Representatives can rely on the Act’s strict obligations for high-risk employment AI. However, they must recognise that most provisions affecting workplace AI will only become enforceable from August 2026 onward, leaving many current systems unregulated in practice until then. During this interim, employers may adopt or expand AI systems that unions can contest only indirectly through the GDPR or other laws, such as the Platform Work Directive.

Although the Act requires documentation, transparency, and logging, it does not grant workers or unions automatic access to these materials. Requesting access will likely become a point of negotiation or conflict, particularly in institutions where employers claim confidentiality or intellectual property protections. While the Act’s transparency obligations apply to deployers, the extent of information workers will receive in practice remains unclear.

To anchor these negotiation challenges in concrete terms, union reps may propose specific bargaining clauses such as creating a joint algorithm review committee, requiring advance notification to unions prior to any deployment or major update of high-risk AI systems, or mandating a standing right for union-appointed experts to audit technical documentation and log files. Additional clauses could include requirements for employer-union co-drafting of transparency reports or joint oversight of algorithmic bias monitoring. By embedding these levers in works council agreements or collective bargaining, representatives can move beyond theoretical rights toward practical, enforceable access to information.

Human oversight requirements may appear reassuring; however, the term “meaningful human oversight” is vaguely defined, permitting tokenistic oversight or mere rubber-stamping of algorithmic decisions. The Act does not guarantee that the human reviewer will be independent, empowered, or trained to challenge AI outputs, despite the prior enforcement of general AI literacy obligations. Consequently, the “human-in-the-loop” clause risks remaining procedural rather than substantive.

To address this, union reps can go beyond confirming that human oversight exists and instead probe its quality. A simple checklist can help assess whether oversight is truly meaningful: Is the reviewer independent from the system provider or subject to conflicts of interest? Does the human reviewer have real authority to challenge or overturn AI decisions? Has the reviewer received adequate training to understand AI outputs and risks? Using such criteria, inspired by ethical audit frameworks, can help representatives push for substantive and effective oversight rather than mere box-ticking.

Furthermore, the Act’s risk and compliance-based framework places significant responsibility on employers and AI providers to self-assess, self-document, and self-monitor. Unions should anticipate asymmetries of information and power, particularly in complex workplaces where algorithmic management is embedded within proprietary systems. Even the prohibition of emotion recognition and other manipulative AI practices, though legally enforceable today, will require active monitoring and reporting by workers, with the burden of proof potentially resting on unions.

To ease this burden and foster a culture of participatory vigilance, unions are encouraged to build collective skills among members. One practical step is to co-create simple checklists or spotting tools that help identify workplace AI systems, questionable practices, or potential violations. By involving workers in designing these shared tools, unions can strengthen members’ confidence to detect, document, and raise concerns together. This collaborative approach turns monitoring from passive surveillance into active community learning, making workers key partners in securing compliance and advancing worker protection.

Finally, although Article 22 of the GDPR provides a crucial right to challenge fully automated decisions, this right is narrow, contested, and often poorly implemented in practice. Many employers argue that decisions influenced by AI, but not formally “solely automated”, fall outside the GDPR’s protections. AI-supported decisions in hiring or performance evaluation often blur this distinction, complicating workers’ ability to demonstrate when an AI system was decisive (Capasso et al., 2025).

For these reasons, the EU AI Act is not a panacea. It provides significant tools, but their effectiveness depends on unions’ capacity to:

- Demand access to documentation, logs, and risk assessments, even when employers are reluctant.

- Monitor workplaces for hidden or indirect uses of AI that the Act may not explicitly capture.

- Negotiate collective agreement clauses that extend beyond the Act to address gaps in oversight, access to information, and worker participation.

- Use the GDPR strategically where the AI Act falls short or has not yet entered into force.

In summary, the EU AI Act opens new opportunities as well as new challenges, and unions will require persistence, expertise, and collective pressure to realise its benefits. Regulations provide useful tools but do not guarantee outcomes. Trade union representatives remain essential in translating legal obligations into effective worker protection.

Emmanuel Wietzel

About the author

An experienced trainer for trade unions (CGT, ETUI, EPSU, USF, FERPA, Eurocadres), Emmanuel Wietzel has been supporting social movement actors in analysing European issues for many years. As an advisor on trade union issues, he brings his expertise to bear on collective strategies to address changes in the world of work. He is currently focusing his attention on the impact of AI on work. He is also working on the renewed role of trade unionism. This dual experience, both practical and analytical, informs his contribution to debates on the future of work and collective representation in Europe.