Digitalisation is not inevitable. It is a collective choice, a social construct that can be discussed, negotiated, and regulated.

Wherever humans encounter machines, our rights must take precedence over code. The promise of performance should not come at the cost of stress and opacity. From diagnosis to solutions, Union Syndicale, together with Public Services International, offers a tool for negotiating, regulating, and protecting health in the workplace. This article deliberately focuses on risks because they are under-recognised in mainstream “innovation” discourse, not because positive effects never exist.

Introduction

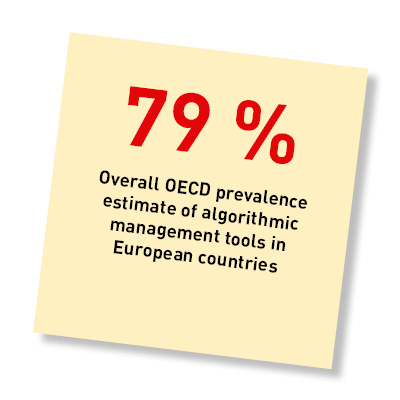

Algorithmic management (AM) is now becoming widespread across all professional sectors, well beyond platforms, driving more and more day-to-day work decisions (planning, assignment, evaluation). Because it operates at the human-machine interface, it reconfigures the autonomy, transparency, and predictability of work and can affect health and well-being (mental load, stress, sense of control), as recent reviews in occupational psychology and public policy show. In other words, as soon as a digital tool distributes tasks, monitors activity, or measures performance, the effects are there, in both industry and services, whether it is a scheduling algorithm, an HR dashboard, or a productivity app.

The following article draws on recent research (1) to shed light on the risks, levers for action, and conditions for the democratic deployment of these technologies. The idea is to propose concrete avenues for union action (data rights, transparency of parameters, human recourse, audit and correction clauses), particularly during collective bargaining or in CPPTs (committees for prevention and protection at work), in order to put the voice of workers back at the heart of algorithmic governance. Now is the time for unions and workers to step forward and demand these protections. Before outlining the tools available to unions, it is useful to examine what the pioneers of algorithmic work can teach us.

—

[1] Angie Zhang, Alexander Boltz, Chun Wei Wang, and Min Kyung Lee. 2022. Algorithmic Management Reimagined For Workers and By Workers: Centering Worker Well-Being in Gig Work. In CHI Conference on Human Factors in Computing Systems (CHI ’22), April 29-May 5, 2022, New Orleans, LA, USA. ACM, New York, NY, USA, 20 pages. https://doi.org/10.1145/3491102.3501866

What lessons can we learn from the pioneers of AM?

Algorithm-based work: when digital management undermines workers

The rise of digital platforms has deeply transformed working conditions in many sectors, particularly taxis and delivery services. As soon as the first forms of algorithmic work appeared, researchers observed a shift in decision-making power: companies no longer relied on human supervisors but on automated systems that evaluated, directed, and sometimes even excluded workers. While this development has been presented as a technological advance bringing flexibility and autonomy, workers’ experiences reveal a very different reality.

Algorithmic management comes with a series of structural problems that profoundly affect well-being, safety, and dignity at work.

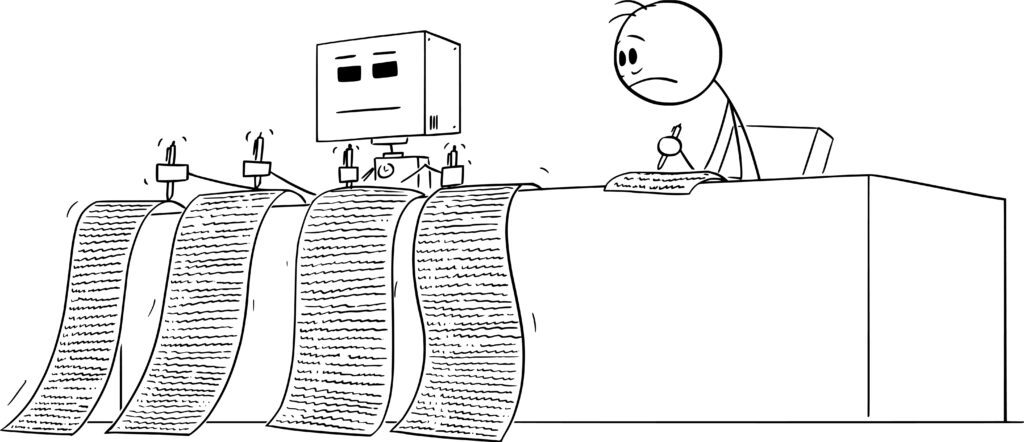

Initially, it appeared that algorithmic systems introduced a form of dehumanised management that did not consider the actual conditions of work. Platforms began collecting vast amounts of data on workers, continuously analysing their activity and imposing intensive workloads without offering any support in return. This constant surveillance created a sense of exhaustion and pressure, especially since workers have access to only a tiny fraction of the information collected about them. Thus, rather than giving workers more control, the “algorithmisation” of work has mainly increased their vulnerability to opaque rules and to automated management focused on economic efficiency rather than human health.

As these practices took root, another major problem became apparent: platforms built an incentive system that resembled a manipulative gamification mechanism.(1) By incorporating elements borrowed from video games, such as levels, variable rewards, badges, and weekly “challenges,” they encourage workers to work longer hours and accept low-paying jobs, at the risk of their own health. Drivers and delivery workers find themselves caught up in a dynamic where they must continually “play” in order to earn more, even though the rules of the game are constantly changing, and the best bonuses are often reserved for newcomers. Far from being entertainment, this gamification becomes a sophisticated control tool, capable of pushing workers beyond their own limits while giving the illusion of voluntary choice.

At the same time, information asymmetry is one of the most critical aspects of algorithmic management. Platforms know everything there is to know about their workers: their break times, their movements, their acceptance rates, their hourly earnings, their speed, and even their preferred parking areas. In contrast, workers have only a fragmented view of the information they need to make informed decisions. They generally do not know the destination of a ride before accepting it, do not understand the criteria for assigning rides, cannot verify the accuracy of compensation calculations, and have no visibility into the mechanisms that influence their ratings. This asymmetry creates a deeply unbalanced power relationship, in which workers must navigate blindly despite the risks of economic loss or physical danger. The promise of a balanced relationship between “independent partners” seems all the more hollow when decision-making power remains concentrated in the hands of an opaque, inaccessible technical system.

—

[1] Gamification: integrating fun elements into work activities.

Finally, one of the most insidious effects of algorithmic work is the radical individualisation of labour, which leads to organised isolation. Unlike traditional workplaces, where employees can interact, help each other, or mobilise collectively, platform workers operate alone, in their vehicles or on the street, constantly on the move and without a shared space. Algorithms assign tasks individually, evaluate them individually, reward them individually, and punish them individually. This systematic fragmentation not only undermines workers’ ability to build solidarity but also prevents the emergence of collective strategies to address structural injustices. Isolation thus becomes an integral part of the platform economy model, limiting the possibility of protest and weakening workers’ collective voice.

In short, algorithmic management is fundamentally altering the nature of work, imposing intensive surveillance, incentive-driven manipulation, structural opacity, and social isolation. Despite platforms’ claims of innovation and flexibility, workers experience declining conditions and diminished agency. This contrast highlights an urgent need to scrutinise algorithmic management and prioritise workers’ rights, dignity, and health in public and union debates.

Gamification is coming to public services: opportunity or managerial excess?

Gamification is no longer just for private companies. It is now entering public administrations, where it helps to engage citizens and employees through reward, challenge, and competition mechanisms. This shift reflects a broader transformation in managerial practices as public services become digitised.

Initially, this approach appears to appeal to many institutions, particularly in professional training. In France, for example, organisations are increasingly using gamified platforms to improve employee motivation and learning attitude, even though the scientific evidence remains mixed in comparative studies(1). But gamification now extends beyond training. Welcome to managerial gamification! It is finding its way into recruitment, internal innovation tools, and even change management processes within government agencies. Specialised journals on public transformation document the creation of role-playing games for interviews, “serious games”(2) for training in service design, and even fun tools designed to speed up certain public decisions.

This development presents significant challenges for trade unions. Gamification, despite its playful appearance, is a deliberate tool for shaping workplace behaviour. It promotes individual performance and competition, directly clashing with the values of public service, such as cooperation, equal treatment, and community. Furthermore, it operates as a subtle means of managerial control, intensifying workloads and conditioning practices without genuine debate.

Gamification can assist learning and engagement, but should never replace genuine professional recognition or mask management practices that erode employee rights. As public services face transformation, safeguarding meaningful work and professional values beyond superficial digital incentives is crucial.

—

[1] Mourad Bofala. Contribuer à l’apprenance des collaborateurs : le rôle de la gamification. Gestion et management. Université Pascal Paoli, 2022. In French. ⟨NNT : 2022CORT0004⟩. ⟨tel-03895804⟩

[2] Editor’s note: a “serious game” is a digital game designed primarily not for entertainment but for learning, training, awareness-raising, or professional simulation. It uses game mechanics (rules, scenarios, feedback) to facilitate the acquisition of skills or knowledge in an educational, organisational, or managerial context.

Putting the lessons of algorithmic management into practice

Drivers, delivery workers, and micro-task platform workers were the first to experience algorithmic management, and their experiences now serve as a valuable warning to the entire world of work. In a 2019 report on “Digitalisation and public services: a trade union perspective,” Public Services International (PSI), in collaboration with the Friedrich Ebert Foundation, emphasises that the social consequences of technologies depend entirely on how they are implemented, regulated, and negotiated, as well as on the ability of trade unions to influence their governance. The issues identified in pioneering sectors are now well known. They fall into two main risk categories. First, those that pose a risk to rights:

- opacity of algorithms, creating unilateral power for employers;

- disguised deregulation, where technology is used to circumvent collective protections;

- information imbalance, entirely to the advantage of the platform;

and those that pose a risk to health and/or working conditions:

- lack of control over data, used for monitoring, evaluation, or punishment;

- isolated and individualised work, which weakens collective organisation;

- manipulative gamification, transforming incentives into tools for intensifying work.

In 2023, PSI launched a unique tool available in French, English, and Spanish: the Digitalisation Negotiation Portal, the first global database of actual negotiation clauses, framework agreements, union advice, and international resources to help unions negotiate all issues related to the digital transformation of work. Designed as a strategic resource centre, this portal gives unions access to content organised by theme—data rights, digital tool governance, teleworking conditions, AI and algorithms, health, safety, inclusion, worker consultation—and allows them to draw on clauses already negotiated in other countries or sectors. It is therefore an essential tool for regaining control in the face of technological developments that are profoundly transforming the organisation of work.

The Portal offers clauses regulating algorithmic transparency, allowing unions to demand information on automated decisions, similar to the struggles waged by ride-hailing drivers to understand the mechanisms of ride distribution. Pioneers have shown that technological opacity creates arbitrary power; PSI responds by citing examples of clauses requiring prior consultation with staff or the explainability of AI systems. It also makes recommendations to unions, such as including restrictive language that sets limits on tools and technologies, as well as on how they are used at work. “It is important to be explicit about how technologies and tools will be used, but it is equally important to specify how they will not be used. Many collective bargaining agreements include language on acceptable uses of technologies and impose limits on their use. With regard to restrictions, unions will ensure that technologies are not used for surveillance or punishment.”

Example of a clause negotiated by Unite in the United Kingdom in 2017:

“It is understood that the implementation of any new technology must comply with all relevant procedures for addressing health and safety issues agreed between the employer and the union, as well as all laws relating to occupational health and safety. The employer agrees to inform the union of any potential impact on workers’ health and safety resulting from this new technology, and to do so as soon as possible and in a spirit of openness. The union and the employer recognise the essential role of cooperation and coordination among union representatives in ensuring that the protections in place for workers’ health and safety are as effective as possible with regard to this new technology. To this end, a risk assessment of this new technology will be carried out with the full involvement of all relevant union representatives before it is implemented in the workplace, as agreed. The risk assessment of the new technology will include, in particular:

- Any potential impact on the mental health of workers.

- Any potential impact on workers with physical disabilities.

- Any potential harm or side effects associated with the chemical or biological products that may be used in connection with this new technology.

In addition, all health and safety union representatives and new technology managers will be given time to participate in union-approved training related to new technology.”

Experiences with algorithm-based work have shown the crucial importance of controlling data, particularly to prevent it from being used as a basis for micromanagement. The Portal therefore proposes clauses on data protection, limiting its use, and implementing safeguards against the abusive surveillance of public sector workers.

The aggressive gamification used by platforms to influence behaviour provides a clear example of the potential abuses of non-negotiated digital management. By bringing together clauses on work organisation, work rhythms, actual workload, and objectives, the Portal helps unions block the introduction of toxic incentive schemes or strictly regulate them.

The Unite clause quoted above shows that it is possible to impose a clear framework for any new technology.

Platform workers, like teleworkers, have highlighted the central issue of digital isolation, which undermines the collective capacity for action. Workers and unions have always sought to protect their right to organise, and in the digital age they must ensure that this right is upheld. The Portal provides tools to protect union representation, internal communication, and the right to organise in digitised work environments.

Unlimited digitisation, cascading psychosocial risks

With regard to health and safety issues, the Portal recommends a comprehensive approach to psychosocial risks (PSRs) that arise directly from the introduction of digital tools, AI, and algorithms: increased pressure linked to monitoring tools, risks induced by new forms of work organisation (including teleworking), mental overload linked to information management (digital infobesity), and the effects of digital devices on stress and well-being.

PSI emphasises the prevention of risks related to information overload, increased surveillance, the pace imposed by digital tools, and new work environments, particularly teleworking. These risks are addressed in Section 7 of the Portal, which provides concrete references for requiring impact assessments, protective measures, appropriate training, and measures to reduce stress or psychosocial risks. Health and safety must remain central obligations in digital transformation processes. Collective bargaining remains essential to regulate these technologies and provide lasting protection for public service workers.

In public services, as elsewhere, certain digital tools exacerbate psychosocial risks. This is particularly true of tools that require constant hyperconnectivity, such as instant messaging (WhatsApp groups, Signal, Teams, Yammer, etc.), notification systems, and collaborative platforms (such as SharePoint), which foster a culture of urgency and constant availability, leading to mental overload and chronic stress. Added to this are management tools that dictate the pace and organisation of work, reducing employee autonomy and increasing the pressure associated with automated performance, a phenomenon widely observed in highly digitised environments. Finally, digital surveillance devices (cameras, keyboard monitoring, presence or motion sensors), geolocation devices (GPS trackers, geolocation applications, AirTags, etc.), and activity tracking amplify the feeling of constant control and degrade the psychological climate at work. Intensive teleworking, structured by these same tools, increases isolation and blurs the boundaries between private and professional life.

Conclusion – Digitalisation is not inevitable

Digitalisation is not inevitable, nor is it an unavoidable technological destiny to which workers must unconditionally adapt. It is a collective choice, a social construct that can be discussed, negotiated, and regulated. The experiences of platforms clearly show that unregulated deployment leads to surveillance, isolation, stress, and loss of control. But they also show the opposite: when workers take back control, when unions impose transparency, human oversight, data safeguards, and limits on the use of AI, the algorithm ceases to be a threat and becomes a tool again. The Bargaining Portal developed by PSI provides the means to transform these lessons into concrete clauses, enforceable rights, and effective protections. At a time when public services are investing heavily in digital technologies, the question is not whether to accept or reject these tools, but how they will be used, in whose service, and within what limits. Taking back control of algorithmic management means remembering that democracy at work does not disappear when code replaces a manager: it simply has to reinvent itself. In the public service, technology is a tool. Work, on the other hand, remains a human endeavour.

Emmanuel Wietzel

About the author

Emmanuel Wietzel is an experienced trainer for European trade union organisations (including the CGT, EPSU, ETUI, USF, FERPA, and Eurocadres). He helps trade unions and their leaders analyse European issues and develop collective strategies to address changes in the world of work. He is currently focusing on the impact of AI on the world of work and the evolving role of trade unionism. Combining practical engagement with analytical expertise, he actively contributes to debates on the future of work and collective bargaining in Europe.